Volume 28 [community edition]

Robots beat the human world record at a Beijing half-marathon; a16z invests in MTS; $300M from BMW i Ventures; Speedrun and Techstars apps open; 20+ jobs at Novig, Suno, Replit, ElevenLabs, and more

Vol 28 TLDR

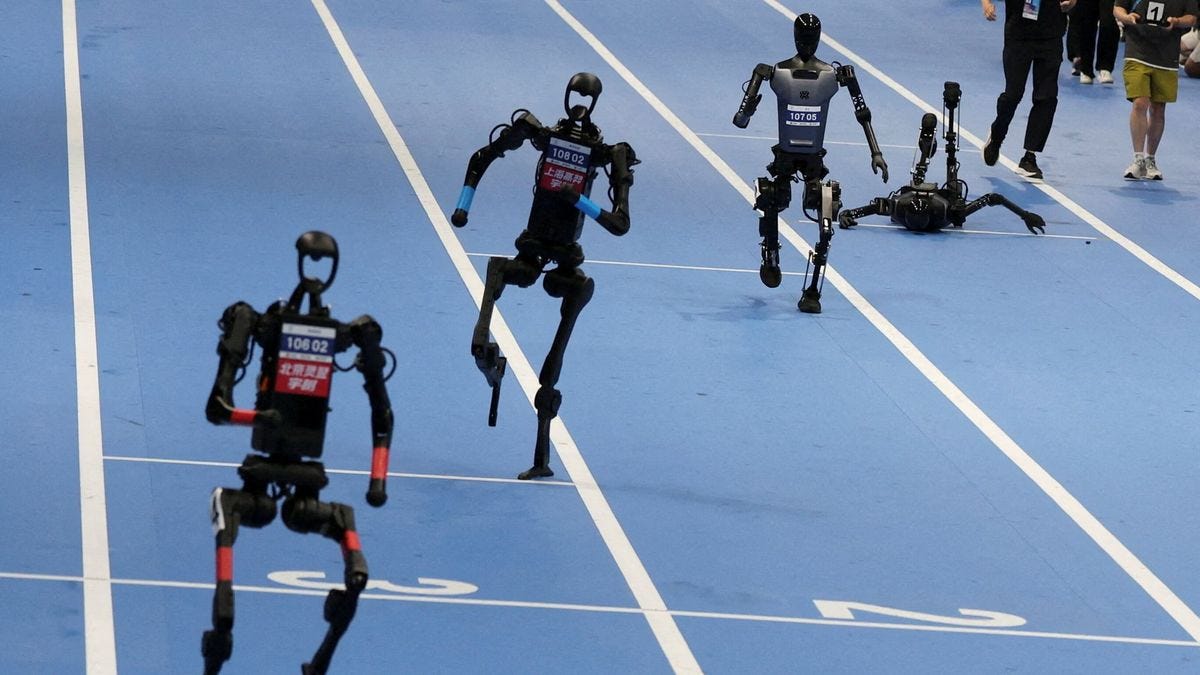

A bipedal humanoid robot ran the Beijing half-marathon in 50:26, beating all 12,000 human competitors and the human world record by nearly seven minutes.

Why robotics is finally working — and why $14B poured into the industry in 2025.

a16z invests in MTS, a 24/7 timeline-native news network on X covering tech, finance, geopolitics, and culture.

20+ jobs at Novig, Suno, Replit, ElevenLabs, and more.

📅 Coming Up...

[NYC] Game Night, 5/14 (Thursday)

For founders + startup engineers. Poker and other games.

[Boston] Tech Week Basketball Tournament, 5/27 (Wednesday)

For founders, investors, and startup teams. Spectator spots available.

⚖️ Opportunities & Resources

a16z Speedrun SR007: applications close May 17, 11:59pm PT. Up to $1M check plus $5M+ in partner credits, with the Summer/Fall cohort running July 27–Oct 11.

BMW i Ventures Fund III ($300M): BMW’s venture arm launched its third fund on April 29 with $300M for agentic AI, physical AI, robotics, manufacturing, and supply chain tech. Active deployment across Seed–Series B in North America and Europe.

AcceleratorX (Europe AI Accelerator): applications open for the first cohort kicking off May 2026, for seed-stage AI startups with a live or near-launch MVP. Offers 2,000+ first users, pan-European distribution, and warm VC intros for EU-based teams.

Google for Startups Accelerator: AI for Energy: applications close June 12 for Europe/Israel and June 30 for North America, for startups using AI to modernize grids and energy efficiency. Equity-free, with mentorship from Google engineers and access to Google’s AI ecosystem.

✍🏻 Culture Report: Can You Beat a Robot in a Race?

Written by Annie Dong.

Last Sunday, a bright red bipedal humanoid robot developed by Chinese smartphone maker Honor blazed through a half-marathon with a record-setting time of 50 minutes, 26 seconds. The robot bested all 12,000 human competitors and surpassed the human world record for a half-marathon by almost seven minutes.

Just last year, 21 humanoid robots lined up for the very same race, yet only six made it to the finish line. The winning robot completed the race in an unimpressive 2 hours and 40 minutes, after three battery changes and one fall. Other robotic runners barely got started, succumbing to glitches at the starting line.

In a single year, robots have gone from barely finishing the race to beating the human world record. That gap is indicative of the broader landscape of the robotics industry. The same acceleration witnessed on the race track is occurring in places far less visible: factory floors, construction sites, elder care facilities, and beyond.

Why Commercial Robotics Keeps Failing

Commercial robotics has had a long track record of failure. Rethink Robotics, founded by legendary MIT roboticist Rodney Brooks in 2008, raised over $150M to build a collaborative factory robot only to shut down in 2018. That same year, Jibo, a $900 social robot promised to be a “charming” personal assistant, also shut down after burning through $73M in funding. In 2019, Anki shuttered after raising a total of $500M+ to build consumer robotic toys. Mayfield Robotics’ Kuri followed. More recently, Segway discontinued its Loomo robot, and iRobot (the creator of the Roomba) filed for Chapter 11 bankruptcy last year.

These failures share a few underlying patterns: long, capital-intensive R&D cycles followed by high deployment costs; painful go-to-market and integration; thin hardware margins and the commoditization of robot solution providers for generic tasks; and the sheer difficulty of the technical challenges robotics companies face.

What Changed?

Three recent developments are breaking the deadlock, signaling that the contemporary shift toward applied AI in the physical world may be structurally different from prior waves.

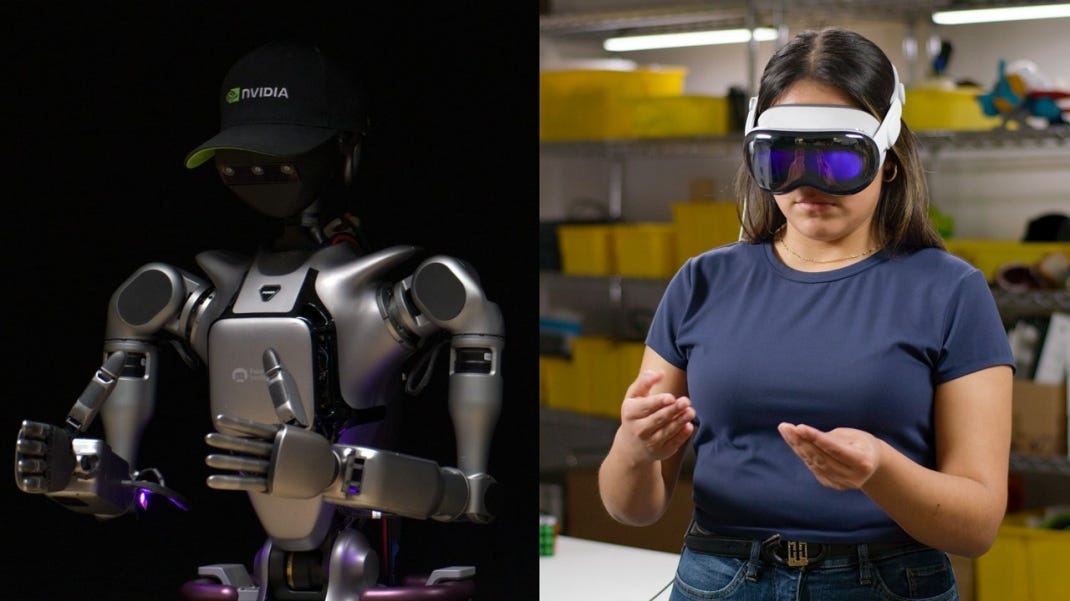

Foundation models for physical actions are here. Previously, every behavior had to be hand-engineered. Now, Vision-Language-Action (VLA) models generalize by building on pretrained Vision-Language Models (VLMs), mapping internal representations of images and language instructions to motor commands.

Robots can now learn from their own failures. Prior systems used imitation learning, which had no mechanism to trace incorrect final outcomes back to earlier mistakes. Reinforcement learning solves this by training a value function that scores each intermediate state by its likelihood of eventual success, so the robot can identify exactly where things went wrong and correct course.

Hardware is getting better and cheaper. Driven largely by Chinese manufacturing, industrial-grade robotics components such as dexterous hands, sensors, and actuators are improving in quality while dropping in price. Overall manufacturing costs for humanoid robots fell 40% between 2023 and 2024.

For access to the full article, subscribe here.

⚙️ Under the Hood: How Does a Robot Learn From Its Own Mistakes?

Written by Priyal Taneja.

In 2023, researchers at Toyota Research Institute spent weeks teleoperating a robot arm through hundreds of demonstrations of a single task: flipping a pancake. The robot learned to replicate the motion almost perfectly, until the pancake landed slightly off-center on the spatula. At that point, the robot had no idea what to do. It had never seen that situation in its training data, so it froze, spatula in the air, pancake on the floor.

This is the core limitation of how robots have learned for most of the field’s history. The dominant approach, imitation learning, works by recording a human performing a task and training the robot to copy that behavior as closely as possible. It’s intuitive, fast to get started, and works surprisingly well for straightforward, repeatable tasks. But there’s a fundamental ceiling, and the industry’s ability to move past it is a big part of why robots went from barely finishing a half-marathon last year to beating the human world record this year.

Imitation Learning is Powerful but Brittle

The idea behind imitation learning is intuitive. A human operator teleoperates a robot through a task (picking up an object, folding a towel, navigating a corridor), and the robot records every state and action pair along the way. A neural network then trains on these demonstrations using supervised learning, essentially learning to map “when I see this situation, I should do this action” from the expert’s behavior.
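To make the “see this situation, do this action” idea concrete, here is a minimal sketch of behavior cloning (the simplest form of imitation learning) in plain Python. Everything in it is an illustrative assumption rather than any real system: the one-dimensional state, the `expert_action` stand-in for a human teleoperator, and the single-weight linear policy used in place of a neural network.

```python
# Behavior cloning sketch: fit a policy to (state, action) pairs with supervised learning.
# Hypothetical setup: state = lateral offset from a target, action = correction applied.

def expert_action(state: float) -> float:
    """Stand-in for the human teleoperator: steer halfway back toward the target."""
    return -0.5 * state

def collect_demonstrations(states):
    """Record the (state, action) pairs the expert produces."""
    return [(s, expert_action(s)) for s in states]

def fit_linear_policy(demos):
    """Least-squares fit of action = w * state (closed form for a single weight)."""
    numerator = sum(s * a for s, a in demos)
    denominator = sum(s * s for s, _ in demos)
    return numerator / denominator

demos = collect_demonstrations([-1.0, -0.5, 0.25, 0.5, 1.0])
w = fit_linear_policy(demos)

def policy(state: float) -> float:
    """The cloned policy: imitate the expert using the fitted weight."""
    return w * state

print(f"learned weight: {w}, action at state 0.8: {policy(0.8)}")
```

The fitted weight comes out at -0.5, matching the stand-in expert, because the toy expert is itself linear. Real demonstrations are noisy and high-dimensional, which is why a neural network takes the place of the single weight.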

This approach has some real strengths:

It’s fast to get started. You don’t need to design a complex reward function or run millions of trial-and-error episodes. A few dozen high-quality demonstrations can produce a working policy.

It’s intuitive to scale. More demonstrations from more environments generally means better generalization, and collecting them is straightforward with teleoperation rigs or motion capture suits.

It sidesteps reward engineering. One of the hardest problems in robotics is defining what “success” looks like mathematically. Imitation learning bypasses that entirely by saying “just do what the human did.”

However, the approach has a deep structural limitation that becomes apparent as soon as things go slightly wrong. Imitation learning has no mechanism for self-correction. The robot learns what the right trajectory looks like, but it doesn’t understand why each step matters or what to do when it drifts off course. If the robot’s gripper slips slightly during a pick-and-place task and the object ends up in a position the demonstrations never covered, the policy has no way to recover because it was never trained on failure states. Researchers call this covariate shift: the states the robot visits during deployment differ from the states in its training data. Small errors then compound over time, because each mistake pushes the robot further from anything it was trained on, and it has no framework for reasoning about where it has ended up.

The result is a system that can replicate success but cannot diagnose or recover from failure, which is a serious problem when deploying robots in environments where things go wrong constantly.
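A toy rollout makes the failure mode easier to see. The sketch below assumes a made-up one-dimensional task where the expert always steps back toward a target at position 0, demonstrations only cover positions -2 through 2, and the cloned policy does nothing in states it has never seen. That last point is a simplifying assumption; a real neural policy would extrapolate in some less predictable way.

```python
# Toy illustration of an imitation policy failing outside its training data.

def expert_action(state: int) -> int:
    """Hypothetical expert: move one cell back toward the target at 0."""
    if state > 0:
        return -1
    if state < 0:
        return 1
    return 0

# Demonstrations only cover states close to the ideal trajectory.
DEMO_STATES = range(-2, 3)
cloned_table = {s: expert_action(s) for s in DEMO_STATES}

def cloned_action(state: int) -> int:
    # Assumption: in a state never seen in training, the clone does nothing.
    return cloned_table.get(state, 0)

def rollout(policy, steps=10, slip_at=3, slip_size=3):
    """Run a policy from state 0 with a single disturbance at step `slip_at`."""
    state = 0
    trajectory = [state]
    for t in range(steps):
        state += policy(state)
        if t == slip_at:
            state += slip_size  # one-off disturbance, e.g. the gripper slips
        trajectory.append(state)
    return trajectory

expert_traj = rollout(expert_action)
cloned_traj = rollout(cloned_action)
print("expert:", expert_traj)
print("clone: ", cloned_traj)
```

Under these assumptions the expert recovers from the slip in three steps, while the cloned policy stays stuck at position 3. Shrinking `slip_size` to 2 keeps the robot inside the demonstrated range, and the clone recovers as well.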

Reinforcement Learning: Trial, Error, and Credit Assignment

Reinforcement learning takes a fundamentally different approach. Rather than copying an expert, the robot learns through interaction with its environment. It takes an action, observes the outcome, receives a reward signal (positive for progress toward the goal, negative for setbacks), and updates its policy to maximize cumulative reward over time.
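The act, observe, reward, update loop can be sketched with tabular Q-learning, one of the simplest RL algorithms. The environment here is a hypothetical five-cell corridor with the goal at the far end. The reward values, learning rate, and purely random exploration are assumptions chosen for brevity, not a description of how any production robot is trained.

```python
import random

N_STATES = 5            # positions 0..4 on a line; position 4 is the goal
GOAL = N_STATES - 1
ACTIONS = (-1, +1)      # step left or step right

def step(state, action):
    """Hypothetical environment: returns (next_state, reward, done)."""
    next_state = min(max(state + action, 0), GOAL)
    if next_state == GOAL:
        return next_state, 1.0, True      # positive reward for reaching the goal
    return next_state, -0.01, False       # small penalty for every other step

def train(episodes=500, alpha=0.5, gamma=0.9, seed=0):
    """Tabular Q-learning with uniformly random exploration."""
    rng = random.Random(seed)
    q = {(s, a): 0.0 for s in range(N_STATES) for a in ACTIONS}
    for _ in range(episodes):
        state = 0
        for _ in range(200):              # cap on episode length
            action = rng.choice(ACTIONS)  # take an action
            next_state, reward, done = step(state, action)  # observe the outcome
            best_next = 0.0 if done else max(q[(next_state, a)] for a in ACTIONS)
            target = reward + gamma * best_next
            q[(state, action)] += alpha * (target - q[(state, action)])  # update
            state = next_state
            if done:
                break
    return q

q_table = train()
greedy_policy = {
    s: max(ACTIONS, key=lambda a: q_table[(s, a)]) for s in range(GOAL)
}
print(greedy_policy)
```

After training, the greedy policy read off the table steps toward the goal from every cell, even though no demonstration ever showed it how.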

The critical concept that makes RL qualitatively different from imitation learning is credit assignment, which is the ability to trace a final outcome (success or failure) back through the chain of decisions that led to it. RL does this by training a value function: a model, typically a neural network, that learns to estimate, for any given state, how much reward the robot can expect from that point forward. For a task scored simply as success or failure, that amounts to how likely the robot is to eventually succeed.

This changes the game in a few important ways:

The robot can identify where things went wrong. If a multi-step manipulation task fails at step 8, the value function can reveal that the real mistake happened at step 3, when the robot positioned its gripper at a slightly wrong angle. Imitation learning would only know that the final outcome was bad.

The robot learns recovery behaviors. Because RL trains on failures as well as successes, the policy naturally develops strategies for correcting errors mid-task rather than giving up when it drifts off the demonstrated trajectory.

The robot discovers solutions the human never showed it. An imitation learning policy is bounded by what the demonstrator did. An RL policy is bounded by what’s physically possible and what the reward signal encourages. Sometimes the robot finds strategies that are more efficient or more robust than anything a human would have demonstrated.
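The credit-assignment idea can be illustrated without a neural network. The sketch below swaps in a simple Monte Carlo average as the value function and uses a hypothetical 8-step task whose outcome is decided by the gripper angle chosen at step 3. The state names and the four logged episodes are made up for illustration.

```python
from collections import defaultdict

def make_episode(good_angle: bool):
    """Hypothetical 8-step task; the gripper angle chosen at step 3 decides the outcome."""
    tag = "good" if good_angle else "bad"
    states = [f"step{t}" for t in range(3)]             # shared states before the choice
    states += [f"step{t}_{tag}" for t in range(3, 9)]   # states after the choice
    outcome = 1.0 if good_angle else 0.0                # 1.0 = success, 0.0 = failure
    return states, outcome

def estimate_values(episodes):
    """Monte Carlo value estimate: average final outcome over episodes visiting each state."""
    totals, counts = defaultdict(float), defaultdict(int)
    for states, outcome in episodes:
        for s in states:
            totals[s] += outcome
            counts[s] += 1
    return {s: totals[s] / counts[s] for s in totals}

def locate_mistake(states, values):
    """Return the step whose transition caused the largest drop in estimated value."""
    drops = [values[states[t - 1]] - values[states[t]] for t in range(1, len(states))]
    return drops.index(max(drops)) + 1

# Three logged successes and one logged failure.
log = [make_episode(True), make_episode(True), make_episode(True), make_episode(False)]
values = estimate_values(log)

failed_states, _ = make_episode(False)
mistake_step = locate_mistake(failed_states, values)
print("value before the choice:", values["step2"])
print("value after the bad choice:", values["step3_bad"])
print("mistake located at step:", mistake_step)
```

In the failed episode the estimated value sits at 0.75 through step 2 and falls to 0 at step 3, so the largest drop points at the gripper-angle decision rather than at the step where the task visibly failed.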

For access to the full article, subscribe here.

🔍 Companies to Watch

Agility Robotics (Series B, $150M): builds bipedal robots that move totes and boxes in warehouses and factories. Already deployed at Amazon, GXO, Toyota, and Mercado Libre.

Machina Labs (Series C, $124M): builds AI-driven factories that manufacture complex metal parts. Their factories use AI-guided robotics to load, form, scan, trim, finish, and assemble complex structures directly from CAD.

Bedrock Robotics (Series B, $270M): bolts cameras, LiDAR, and a computing unit onto existing excavators to make them autonomous.

1X Technologies (Series B, $100M): makes a humanoid robot for the home. First company to open real consumer preorders, priced at $20,000 or $499/month, with U.S. deliveries beginning this year.

Figure AI (Series C, $1B): makes general-purpose humanoid robots. Started with deployment in factory assembly lines, now scaling production and moving into consumer applications with Figure 03.

Physical Intelligence (Series A, $400M): building a general-purpose AI brain that can plug into and control any robot. The model layer that any robot company can fine-tune for their specific task rather than building intelligence from scratch.

🦄 Jobs

Novig: prediction markets sportsbook. Chief of Staff (NYC)

SoftBank: global tech investor. VC Analyst (Menlo Park)

Vega: Growth and Product Engineer (NYC)

Suno: AI music generation (Mikey Shulman). Product Manager, Artists & Creators (NYC / Venice Beach)

Counsel Health: AI-enabled, physician-supervised primary care (Muthu Alagappan). Senior Product Manager, Consumer (NYC)

Comfy: open-source node-based workflow platform for generative AI (Yoland Yan). Founding Customer Success Manager (SF)

Salient: financial ops / AI loan servicing (YC W23). Senior SWE, Core Product (SF)

Afresh: AI for grocery ordering & food waste reduction (Matt Schwartz). Senior Product Manager (Remote/HQ)

Flow: community & real estate platform. Senior Backend SWE (SF / NYC)

Replit: AI-powered software development (Amjad Masad). Chief of Staff to the CEO (Foster City, CA)

ElevenLabs: AI audio & voice generation. AI Creative Producer, Creator Growth (Remote, Europe)

For access to the full job list, subscribe here.

👀 Interesting Things from This Week

a16z announced an investment in a media company called MTS: a timeline-native live news network that’s always on, monitoring tech, finance, geopolitics and culture.

See you next week,

Maggie + Jonas